Last Updated on April 13, 2026 by admin

ChatGPT’s Memory Upgrade: A New Chapter in Conversational AI

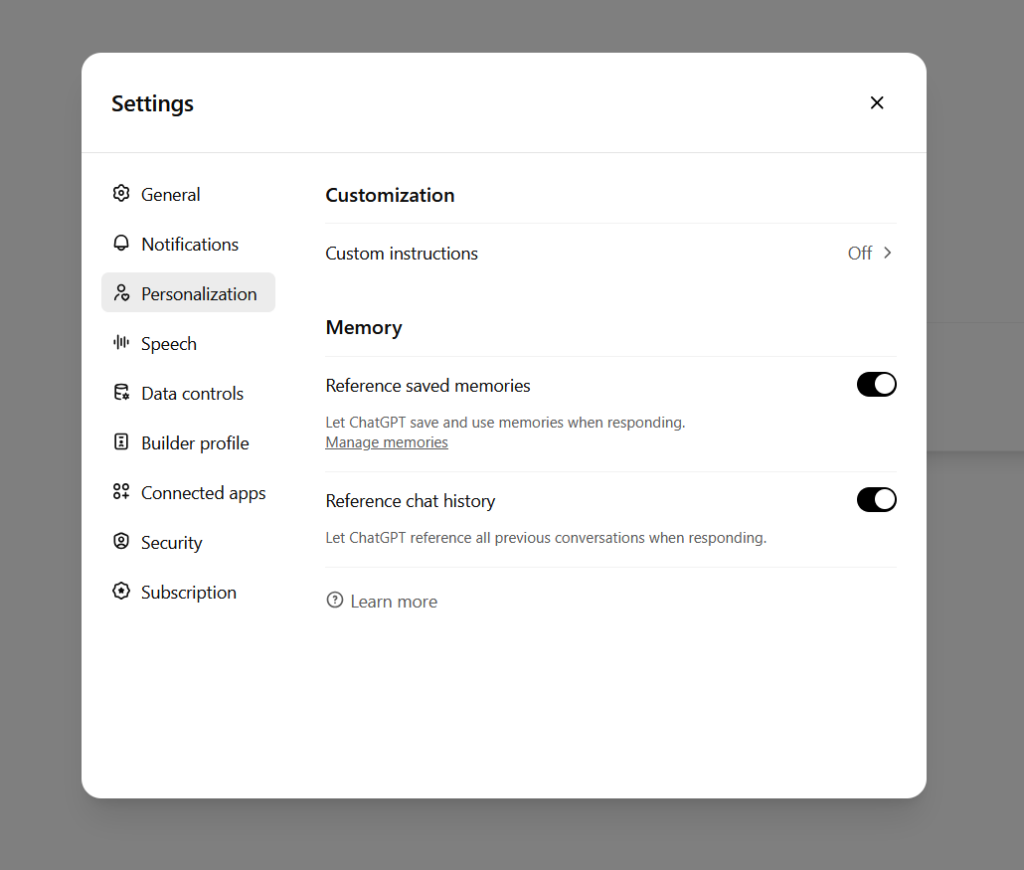

ChatGPT has introduced a major shift in how it interacts with users: it can now remember and reference all your previous chats. This change turns what used to be isolated sessions into a continuous conversation, letting the chatbot build context over time. With this memory capability, the assistant no longer starts fresh each time. Your history carries over, allowing for more personalized, coherent, and context-aware interactions.

How Persistent Memory Might Work

To provide continuous conversational context, the system likely stores information from past conversations: preferences, names, recurring themes, styles, and any private details that you choose to share. This memory is then accessible in future chat sessions. The AI can draw on past discussions to improve responses, recall when something was mentioned, or avoid repeating questions. The technical design would have to balance storage, privacy, data retrieval speed, and the ethical handling of retained user data.

Memory may include details such as a user’s favorite topics, writing style preferences, project ideas previously discussed, or a personal schedule where relevant. The system probably also has mechanisms to determine which memories to keep and which to discard: old, irrelevant, or sensitive data might be pruned automatically or removed by the user.
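As a rough illustration of the idea described above, here is a minimal Python sketch of a memory store that saves items, recalls them by simple word overlap, and prunes anything flagged as sensitive. Everything in it (`MemoryItem`, `MemoryStore`, the category labels, the word-overlap matching) is a hypothetical stand-in chosen for illustration. It is not how ChatGPT actually implements memory, which has not been described here.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    text: str
    category: str            # hypothetical label, e.g. "preference" or "project"
    sensitive: bool = False  # assumption: sensitivity is flagged when saving


@dataclass
class MemoryStore:
    items: list[MemoryItem] = field(default_factory=list)

    def remember(self, text: str, category: str, sensitive: bool = False) -> None:
        """Save one piece of information from a conversation."""
        self.items.append(MemoryItem(text, category, sensitive))

    def recall(self, query: str) -> list[str]:
        """Return stored texts that share at least one word with the query."""
        words = set(query.lower().split())
        return [m.text for m in self.items if words & set(m.text.lower().split())]

    def prune_sensitive(self) -> int:
        """Drop items flagged as sensitive; return how many were removed."""
        before = len(self.items)
        self.items = [m for m in self.items if not m.sensitive]
        return before - len(self.items)


store = MemoryStore()
store.remember("prefers concise bullet points", "preference")
store.remember("writing a fantasy novel", "project")
store.remember("takes medication daily", "health", sensitive=True)

print(store.recall("help with my novel"))  # ['writing a fantasy novel']
print(store.prune_sensitive())             # 1
```

A production system would more plausibly use semantic search rather than word overlap, but the shape is the same: write on one session, read on the next, and prune by policy.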

Benefits: More Natural, Less Repetition

One of the most immediate benefits is more natural conversation. Often users find they have to repeat themselves—re-explain background, preferences, context for a project, or what they’re working on. With memory, the AI can recognize prior information and avoid asking redundant questions.

Users working on ongoing tasks, such as writing a book, developing a business plan, or studying a subject, could find this feature especially useful. The assistant can build on prior work, recall earlier drafts, reintroduce suggestions made before, and follow through with coherent advice across sessions.

Personalization improves too. Over time, ChatGPT could tune its responses to your tone, interests, knowledge level, and even preferred formats (e.g. concise bullet points, long explanations, technical or lay language). It can adapt not just to “what you ask,” but to “how you like to be helped.”

Risks, Privacy, and Ethical Considerations

Memory is powerful, but it introduces substantial risks. Retaining personal data over time means there is more to protect. Sensitive personal information such as health data, financial details, or personal beliefs could be stored, and its exposure could cause harm.

There’s the question of consent: which memories are stored automatically, and which require opt-in? Users will need clarity and control over what’s remembered, for how long, and how it’s used. Mechanisms to delete specific memories, clear all memory, or restrict what kinds of data are saved become essential.

Bias and error can also accumulate. If ChatGPT misinterprets or stores incorrect information (e.g. a misremembered fact or preference), future responses might propagate those errors. Feedback loops might reinforce inaccuracies.

Security is another concern. Data stored on servers becomes a target. Strong encryption, access controls, anonymization, and transparent data management policies will be necessary to reduce risk.

Impact on User Experience

For many users, this memory upgrade could make interactions far more efficient. Imagine returning to a conversation about a diet plan, or a creative writing project, and having the AI remember your prior prompts, the style you like, even what you didn’t like. The experience feels more like collaborating with a human who remembers past sessions.

For professional users—writers, developers, educators—memory can reduce friction. There’s less need to carry forward notes manually, re-brief the assistant, or save logs for reference.

However, some users might feel uncomfortable having their data stored persistently. It might feel invasive if the AI references something personal unexpectedly, or if the storage seems opaque. Trust will depend heavily on transparency: what is stored, how it is secured, and whether the AI can be made to forget.

Control, Transparency, and User Agency

A well-implemented memory feature should come with strong user controls. Users should be able to view what has been remembered, edit or delete memories, turn off memory entirely, or limit memory to certain categories (e.g. creative projects only, preferences only, or nothing at all).
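To make these controls concrete, the following Python sketch shows one way they could fit together: a per-user object that supports viewing, editing, and deleting memories, an off switch, and a category allow-list. The class name `UserMemoryControls` and all of its methods are assumptions made for this example and do not describe any real ChatGPT setting or API.

```python
class UserMemoryControls:
    """Hypothetical per-user memory settings plus the stored memories."""

    def __init__(self, allowed_categories=None):
        self.enabled = True
        # None means every category may be saved (assumption for this sketch)
        self.allowed_categories = allowed_categories
        self._memories = {}  # memory id -> (category, text)
        self._next_id = 1

    def save(self, category, text):
        """Store a memory if the settings permit; return its id, else None."""
        if not self.enabled:
            return None
        if self.allowed_categories is not None and category not in self.allowed_categories:
            return None
        memory_id = self._next_id
        self._memories[memory_id] = (category, text)
        self._next_id += 1
        return memory_id

    def view(self):
        """Return a copy of everything currently remembered."""
        return dict(self._memories)

    def edit(self, memory_id, new_text):
        """Replace the text of an existing memory, keeping its category."""
        category, _ = self._memories[memory_id]
        self._memories[memory_id] = (category, new_text)

    def delete(self, memory_id):
        """Forget a single memory; unknown ids are ignored."""
        self._memories.pop(memory_id, None)

    def turn_off(self, clear=True):
        """Disable memory and, by default, erase what was stored."""
        self.enabled = False
        if clear:
            self._memories.clear()


controls = UserMemoryControls(allowed_categories={"creative projects", "preferences"})
kept = controls.save("preferences", "likes short answers")
blocked = controls.save("health", "mentioned a diagnosis")  # not in the allow-list
controls.edit(kept, "likes detailed answers")
print(controls.view())  # {1: ('preferences', 'likes detailed answers')}
```

The design choice worth noting is that the category check happens at save time, so disallowed data is never written in the first place rather than being filtered out later.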

Transparency is critical. Users should clearly understand what data is being stored, how it is used, and where it is saved. Policies about data retention periods, deletion protocols, anonymization, and third‑party access need to be communicated in an understandable way.

User agency also involves opt‑in choices. People should choose whether they want memory at all, and possibly choose levels (full memory, limited memory, no memory). This is especially important for sensitive personal data.

Comparisons: How Memory Stacks Against Other AI Assistants

Some other AI systems have offered memory or long-term context for a while, sometimes only in premium tiers or enterprise tools, but mostly in limited or narrowly defined ways. What’s different here is the promise of memory that spans all your chats, across sessions, in general usage.

Competitors might provide persistent project history, notes storage, or context recall, but often with explicit triggers (user uploads, saved files). ChatGPT’s implementation, which draws on memory across general conversation, raises both the power and the risk.

Also, other assistants might impose stricter privacy frameworks or less aggressive retention. It will be interesting to see how ChatGPT balances robust memory with private, secure, user‑first policies compared to alternatives.

Possible Use Cases

Ongoing creative work: writing novels, songs, or screenplays, where style preferences and prior drafts matter.

Learning or tutoring: tracking what you have asked, which concepts you struggle with, and which style of explanation helps you most.

Professional tasks: drafting emails, reports, and proposals, where an assistant that knows your voice, prior drafts, and context saves time.

Personal management: remembering routines, preferences, plan reminders, maybe travel itineraries or recipes.

Casual conversation, journaling, exploring new hobbies: memory could help in slowly building richer interactions, recognizing recurring themes or projects.

Challenges in Implementation

Technical infrastructure must scale: storing and retrieving contextual history for potentially millions of users is no small task. Keeping latency low and indexing and retrieval efficient, while preserving privacy and keeping each user’s data segregated, will require engineering rigor.

Determining what to store: not all conversation data is equally useful. Some is sensitive, some is ephemeral. Retention policies and memory decay (i.e. forgetting unused information over time) may help balance utility against risk.
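Memory decay can be sketched with a simple exponential half-life: each memory's score halves for every fixed period it goes unused, and memories that fall below a threshold are forgotten. The function names, the 30-day half-life, the 0.2 threshold, and the `importance` field below are all illustrative assumptions, not a description of any real retention policy.

```python
def decayed_score(importance: float, days_since_used: float,
                  half_life_days: float = 30.0) -> float:
    """Halve the importance for every half-life that passes without use."""
    return importance * 0.5 ** (days_since_used / half_life_days)


def select_memories_to_keep(memories, threshold=0.2, half_life_days=30.0):
    """Keep only memories whose decayed score is still at or above the threshold."""
    return [
        m for m in memories
        if decayed_score(m["importance"], m["days_since_used"], half_life_days) >= threshold
    ]


memories = [
    {"text": "prefers bullet points", "importance": 1.0, "days_since_used": 30},
    {"text": "asked about a one-off recipe", "importance": 0.5, "days_since_used": 90},
]

# 1.0 * 0.5**1 = 0.5 (kept); 0.5 * 0.5**3 = 0.0625 (forgotten)
for m in select_memories_to_keep(memories):
    print(m["text"])  # prefers bullet points
```

Resetting `days_since_used` whenever a memory is recalled would let frequently used information persist while one-off details fade.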

Moderation and filtering: memory may store content that later becomes problematic. There need to be ways to review, flag, and remove memories that violate policy.

Balancing personalization vs safety: a memory that is too “sticky” might lead to inappropriate references or reveal more than a user intended.

Broader Implications

Memory changes the model of interaction: from stateless Q&A to an ongoing relationship. This shifts expectations of what conversational AI can do. Users might start expecting more coherence, follow-ups, and the ability to recall earlier threads.

Potential for emotional attachment or anthropomorphism could increase. If the AI recalls personal details, users might feel more connected to it, for better or worse. This could affect user behavior.

Regulatory interest will likely intensify. Data protection authorities may examine how user data is stored, used, and protected, and how consent for it is obtained.

Ethical AI debates—especially around privacy, autonomy, control—will gain new dimensions.

What Users Should Do

Review memory settings as soon as the feature rolls out. Decide how much memory you want ChatGPT to have.

Be thoughtful about what you share. Even if data is secure, any persistent memory means your past words might be referenced later.

Use deletion and editing tools. If something has changed—preference, project, or belief—make sure the memory reflects current reality.

Maintain awareness of potential errors. The AI remembering is great, but it will not always remember correctly. Be vigilant.

Keep backups of important work externally. While memory helps, do not rely solely on AI memory for critical record keeping.